“How many visits before they buy?” sits at the intersection of two things B2B teams argue about constantly: how much to invest in retargeting, and how aggressively to engage returning visitors. Every team has a theory. Few have the data. The gap exists because most analytics tools cannot stitch a buyer’s multiple sessions across devices and months back to a single person.

I am George, founder of Leadpipe. Deterministic identity matching is what we do. It means we can attach an anonymous visit in January to the same person’s form fill in March to the same closed-won deal in May. This post is the framework for reading the return-visit curve honestly, what it implies for retargeting and outbound, and how the verified Leadpipe accuracy data anchors the question.

The short version

Most B2B buyers do not convert on visit one. The return-visit curve is steep, not flat. Conversion probability rises sharply across the early visits, then flattens with a long tail of buyers who take many visits before they identify themselves. Larger deals visit more. Regulated industries visit more. Most marketing attribution tools credit the last touch, which means the first several visits get zero credit, which means first-touch channels look worse than they deserve.

If you are shutting off retargeting because “the click did not convert,” the framework in this post is the argument for keeping it on.

Why the curve is hard to measure

Three reasons most teams cannot read their own return-visit curve.

1. Cross-device sessions look like different people

A buyer who switches from laptop to phone to work desktop shows up as 2 or 3 different visitors in standard analytics. The curve flattens because the same person’s sessions are spread across three identities.

2. Cookie expiry erases the early visits

A first visit in January looks like an anonymous bounce. A return visit in April, after the cookie has expired, looks like a brand-new visitor. The “first touch” in your CRM is actually the buyer’s fifth visit.

3. The form fill is the only attribution event

Most attribution models treat the first identifiable event (form fill, demo request, free trial) as visit 1. Everything before that does not exist in the dataset. The curve is built on the wrong starting point.

Deterministic person-level identification fixes this. With a persistent identity graph, a session in January and a session in April from the same person resolve to the same record even if the cookie is gone. The curve becomes readable.
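To make the difference concrete, here is a minimal sketch of counting visits by cookie versus by resolved person. The `Session` record, the `person_id` field, and the sample data are all hypothetical; how an identity graph actually produces that ID is outside what this sketch covers.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Session:
    cookie_id: str  # what cookie-based analytics sees
    person_id: str  # hypothetical: the ID an identity graph resolves to
    day: date


def visits_per_key(sessions, key):
    """Count sessions grouped by the named attribute."""
    counts = defaultdict(int)
    for s in sessions:
        counts[getattr(s, key)] += 1
    return dict(counts)


# Made-up sessions: one buyer across devices and an expired cookie.
sessions = [
    Session("cookie-a", "person-1", date(2024, 1, 10)),  # laptop
    Session("cookie-b", "person-1", date(2024, 2, 2)),   # phone
    Session("cookie-c", "person-1", date(2024, 4, 15)),  # laptop, new cookie
    Session("cookie-d", "person-2", date(2024, 4, 16)),
]

by_cookie = visits_per_key(sessions, "cookie_id")   # four one-visit "visitors"
by_person = visits_per_key(sessions, "person_id")   # one person with three visits
```

Grouped by cookie, every session looks like a first visit. Grouped by person, the three-visit buyer reappears.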

What the framework tells you

When you can stitch sessions to people, three patterns emerge across industries and deal sizes.

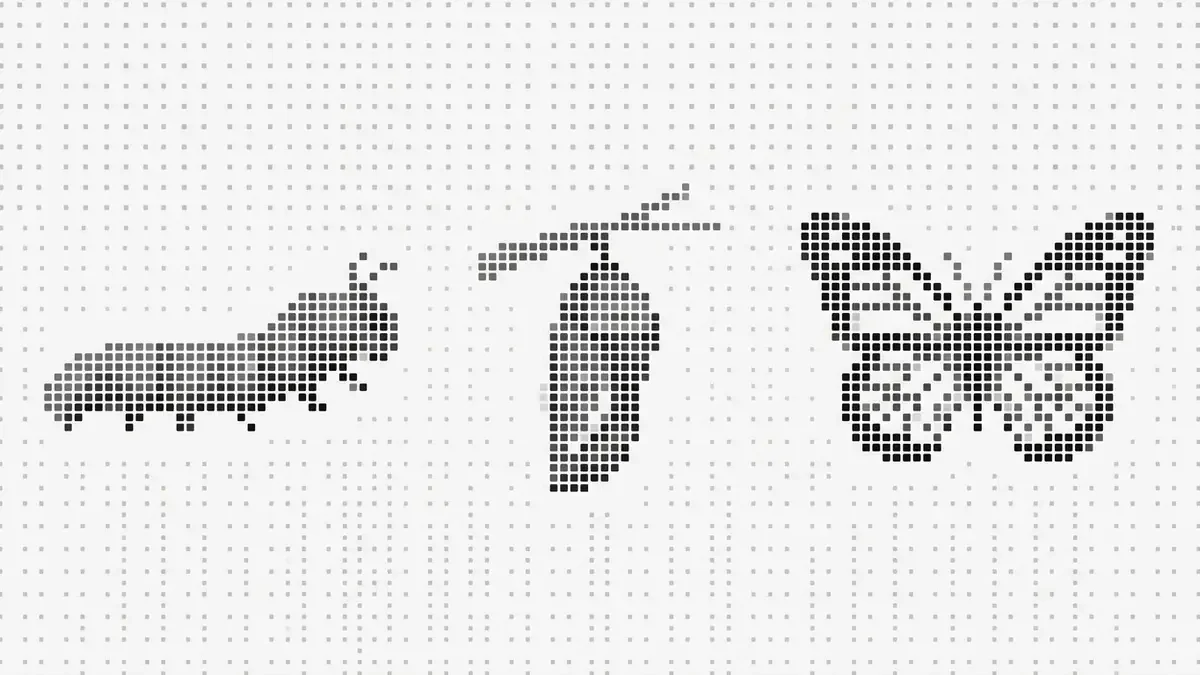

Pattern 1: most buyers return before they identify themselves

The mode is rarely visit 1. Most buyers complete several research sessions before they fill out a form, request a demo, or start a trial. The shape of the distribution is heavily right-skewed: a small share converts on the first visit, a long tail converts on visits 5 through 20+.

This is consistent with the Gartner finding that B2B buyers are 70%+ through their decision before they contact a vendor. Most of that 70% happens on your site without your team seeing it.
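As a rough sketch of how you might check the shape on your own data, the snippet below summarizes the visit number on which each buyer converted. The sample list is invented for illustration and is not Leadpipe data; `summarize` is a hypothetical helper.

```python
from collections import Counter
from statistics import mean, median


def summarize(conversion_visits):
    """Summarize the visit number on which each buyer converted."""
    histogram = Counter(conversion_visits)
    return {
        "share_on_first_visit": histogram[1] / len(conversion_visits),
        "median": median(conversion_visits),
        "mean": mean(conversion_visits),
    }


# Invented sample: one entry per converted buyer.
sample = [1, 2, 3, 3, 4, 4, 5, 6, 8, 12, 20]
summary = summarize(sample)
```

A mean well above the median is the usual sign of a right-skewed distribution: a few high-visit buyers pull the average up while the typical buyer sits lower.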

Pattern 2: ACV predicts visit count

| Deal size (ACV) | Typical visit count before conversion | Typical research window |
|---|---|---|
| <$10K | Lower, often single-digit | Days to weeks |
| $10-50K | Mid, often a handful of visits | 2-6 weeks |
| $50-100K | Higher, multiple visits over weeks | 6-12 weeks |
| $100K+ | Highest, often many visits over months | 8-16+ weeks |

Enterprise buyers visit more. That sounds obvious, but the magnitude matters. A buyer signing a six-figure ACV deal often visits more than ten times before any self-identification event, and the research window often spans more than two months. A buyer signing a $5K ACV deal usually converts in days to weeks with far fewer touches.

The practical implication: if your team’s SDR motion is “book a meeting on visit 1,” you are optimizing for the smallest-deal cohort. Larger deals require a return-visit strategy.
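If you want to test this pattern on your own closed-won deals, one possible sketch is to bucket deals by ACV band and compare median visit counts. The band edges follow the table above, with the boundary handling (for example, exactly $50K falling in the higher band) chosen arbitrarily here. The deals are invented.

```python
from collections import defaultdict
from statistics import median


def acv_band(acv):
    """Map a deal's ACV in dollars to a band label (boundaries are an assumption)."""
    if acv < 10_000:
        return "<$10K"
    if acv < 50_000:
        return "$10-50K"
    if acv < 100_000:
        return "$50-100K"
    return "$100K+"


def median_visits_by_band(deals):
    """deals: iterable of (acv, visits_before_conversion) pairs."""
    grouped = defaultdict(list)
    for acv, visits in deals:
        grouped[acv_band(acv)].append(visits)
    return {band: median(visits) for band, visits in grouped.items()}


# Invented closed-won deals: (ACV, visits before conversion).
deals = [
    (5_000, 2), (8_000, 3),
    (25_000, 5), (40_000, 6),
    (75_000, 9),
    (150_000, 14), (220_000, 11),
]
visits_by_band = median_visits_by_band(deals)
```

Medians are used rather than means so that a single very long research cycle does not dominate a small cohort.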

Pattern 3: regulated industries over-index on visit count

| Industry pattern | Why visit count is higher |
|---|---|
| Cybersecurity | Procurement plus security review |
| Financial services | Compliance and vendor risk review |
| Healthcare | HIPAA and clinical-evaluation gates |
| Manufacturing / industrial | Long capital-equipment evaluation cycles |
| Pharma / life sciences | Regulatory review windows |

The research curve is longer because procurement, compliance, and review require more evaluation touches before someone is willing to identify themselves to a sales team.

Pages visited by visit number

The pages a buyer revisits shift predictably as the research window progresses.

| Visit position | Most common page type |
|---|---|
| Visit 1 | Blog post, homepage, or paid ad landing |
| Visit 2 | Comparison or feature page |
| Visit 3 | Specific use case, integration, or case study |
| Visit 4 | Pricing or plan comparison |
| Visit 5+ | Pricing again, demo, or signup |

The pattern most often observed: the first visit is content-driven (blog or paid ad landing), middle visits are product-driven (features, docs, comparisons), and the conversion visit is almost always pricing, demo, or signup. That arc is useful for pacing retargeting creative and sales outreach content. Hitting a visit-3 buyer with a case study lands. Hitting a visit-1 buyer with the same case study is wasted.

The implication for your retargeting: do not show the same creative at every visit. The buyer’s question changes between visit 1 and visit 5. Your creative should change with it.
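A sequencing rule like this can be sketched as a simple lookup keyed on visit number. The creative labels below are illustrative placeholders loosely based on the page-type table above, not a prescribed mapping, and `creative_for_visit` is a hypothetical helper.

```python
# Illustrative mapping from visit position to creative theme.
CREATIVE_BY_VISIT = {
    1: "educational content",
    2: "comparison",
    3: "case study",
    4: "pricing context",
}
LATE_STAGE_CREATIVE = "demo or signup offer"  # used for visit 5 and beyond


def creative_for_visit(visit_number):
    """Pick a creative theme for a buyer on the given visit number."""
    if visit_number < 1:
        raise ValueError("visit_number must be 1 or greater")
    return CREATIVE_BY_VISIT.get(visit_number, LATE_STAGE_CREATIVE)


plan = [creative_for_visit(n) for n in (1, 3, 7)]
```

In practice the visit number would come from a person-level visit count rather than a cookie-level one, otherwise returning buyers keep seeing visit-1 creative.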

What the verified Leadpipe data says about match rate over visits

A side-note finding that matters for anyone using visitor identification. Match rate per visit is not flat.

The mechanical reason: each return visit adds deterministic signals (same device, same browser context, cross-session cookie persistence, occasional authenticated actions). Match confidence climbs with visit count. This is a feature of deterministic identity graphs, and it is the reason encouraging return traffic directly improves your pipeline visibility even before the buyer converts.

Leadpipe’s average on US B2B traffic sits at 30-40%+ overall. Return-visit-heavy sites cluster above that average because the identity graph has more signal to work with on a returning device. The closest independent anchor we have on identity quality is the Gartner-certified audit of 75,000 visitors over 120 days.

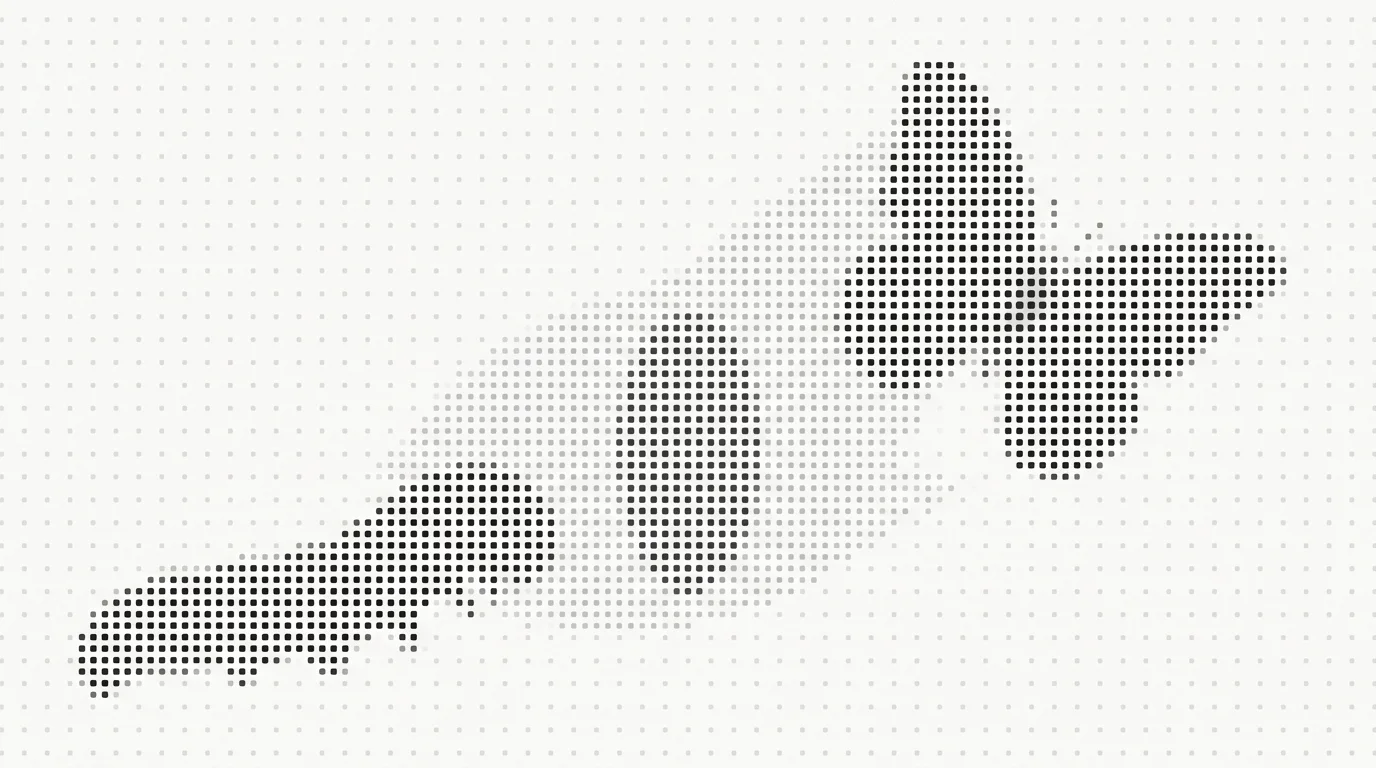

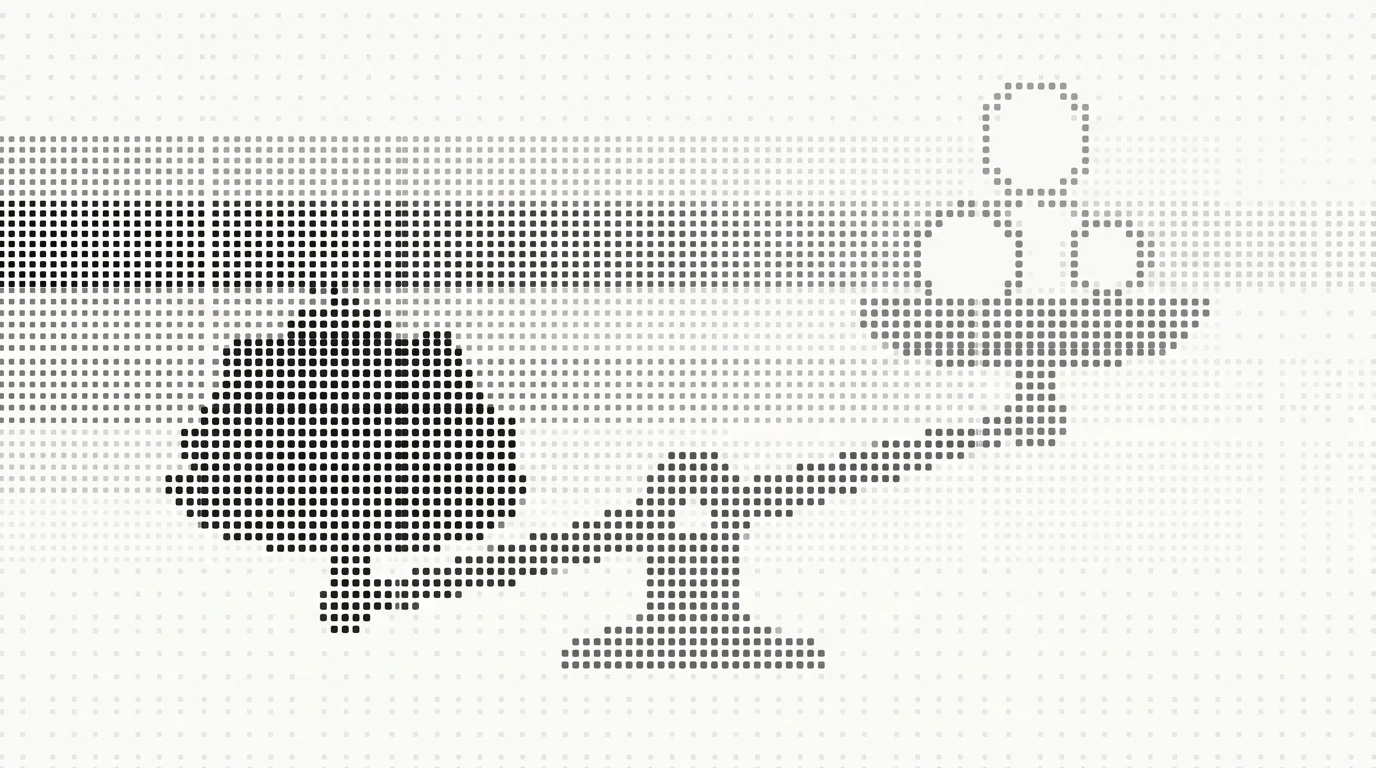

Identification accuracy (independent audit):

Leadpipe ████████████████████ 8.7/10

RB2B ███████████ 5.2/10

Warmly ████████ 4.0/10

The accuracy gap matters more on the return-visit curve than on a single visit. Probabilistic tools may guess the same person on visit 1 and a different person on visit 4, breaking the stitch. Deterministic graphs hold the identity across the full session history, which is what makes the curve readable in the first place.

What most attribution models miss

Standard last-touch attribution in Google Analytics, HubSpot, and most paid-ad platforms credits the converting click. If the median buyer takes a handful of visits to convert, every earlier visit gets no credit at all.

The channels those earlier visits came from (often organic, direct, referral, retargeting) look worse than they are. Paid search gets over-credited because it tends to show up late, right before the form fill. This is the mechanism behind the pattern we covered in Google Analytics is lying about pipeline: your attribution report is a description of your reporting tool, not your buyers.

Four downstream consequences of misreading the curve:

| Consequence | What you do wrong |
|---|---|
| Cut content marketing | Because “blog does not convert,” when blog is visit 1, not visit 5 |
| Tighten retargeting window | Because “anyone older than 14 days does not convert,” cutting off the long tail |
| Over-invest in paid search | Because “paid search has the best CPA,” when paid search shows up late |
| Pull SDR off content-engaged accounts | Because “they did not fill out a form,” yet they will, on visit 7 |

Identification closes the attribution gap by attaching the same person to all of their visits, regardless of which channel referred each one.
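To see how much the model choice changes the picture, here is a small sketch comparing last-touch credit with linear (equal-credit) attribution over one stitched journey. Linear attribution is just one common alternative picked for illustration, not a recommendation from this post, and the journey is invented.

```python
from collections import defaultdict


def last_touch(journey):
    """Give all credit to the channel of the converting visit."""
    return {journey[-1]: 1.0}


def linear(journey):
    """Split credit equally across every visit in the journey."""
    credit = defaultdict(float)
    for channel in journey:
        credit[channel] += 1.0 / len(journey)
    return dict(credit)


# Invented five-visit journey for one identified buyer, in visit order.
journey = ["organic", "direct", "retargeting", "organic", "paid_search"]

last_touch_credit = last_touch(journey)  # paid_search takes everything
linear_credit = linear(journey)          # organic now carries the largest share
```

Neither model is "correct." The point is that once all five visits are attached to one person, the early channels at least become visible to whichever model you choose.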

Implications for retargeting

Three takeaways for your retargeting strategy.

1. Extend the window to 90 days minimum

A 14-day retargeting window cuts off the long tail of the return-visit curve. Move to 90 days. The ROAS math for that cohort usually wins even at higher frequency caps because the cohort that converts at visit 7+ is disproportionately the higher-ACV cohort.
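One way to sanity-check a window length is to measure what share of converting visits arrived within that many days of the buyer's previous visit. This is a simplified sketch: the gap values are invented, and a real audience window depends on how your ad platform defines membership.

```python
def share_within_window(gap_days, window_days):
    """Share of conversions whose gap since the prior visit fits in the window."""
    if not gap_days:
        return 0.0
    reachable = sum(1 for gap in gap_days if gap <= window_days)
    return reachable / len(gap_days)


# Invented gaps (in days) between the previous visit and the converting visit.
gaps = [3, 9, 12, 20, 35, 48, 60, 75, 88, 110]

share_14 = share_within_window(gaps, 14)  # 0.3 with this sample
share_90 = share_within_window(gaps, 90)  # 0.9 with this sample
```

With this made-up sample, the 14-day window would still be showing ads to only 3 of the 10 eventual converters. Your own distribution may look quite different.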

2. Sequence creative by visit position

A buyer on visit 2 wants a comparison. A buyer on visit 4 wants pricing context. A buyer on visit 7 wants a case study or a security trust signal. Build sequence-aware creative.

3. Score returns, not first-time visits

A returning visitor has self-selected through memory (they came back) in a way a first-timer has not. Put a larger weight on return-visit signals in lead scoring. This is one of the few places where intent scoring is almost binary: a return visit is a much stronger signal than a first visit, regardless of pages viewed.
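A scoring rule along these lines might look like the sketch below. The weights are arbitrary placeholders chosen so that a single return visit outweighs several page views. Tune them against your own closed-won data.

```python
def lead_score(visit_count, pages_viewed, return_weight=10.0, page_weight=1.0):
    """Hypothetical score in which return visits dominate page views."""
    if visit_count < 1:
        raise ValueError("visit_count must be 1 or greater")
    return_visits = visit_count - 1  # visits after the first one
    return return_weight * return_visits + page_weight * pages_viewed


first_timer = lead_score(visit_count=1, pages_viewed=6)  # busy single session
returner = lead_score(visit_count=3, pages_viewed=2)     # light but repeated
```

Here the returner scores 22.0 against the first-timer's 6.0, even though the first-timer viewed three times as many pages.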

Implications for the SDR motion

Three takeaways for outbound.

1. Treat return visitors as higher priority than first-timers

If your SDR queue is sorted by recency only, you are surfacing first-time visitors equally with returning visitors. Sort by visit count, not just recency. Returning visitors close at materially higher rates because they have already self-qualified through memory.
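As a minimal sketch, re-sorting the queue can be a two-part sort key: visit count first, recency as the tiebreaker. The `Visitor` record and its fields are hypothetical stand-ins for whatever your CRM exposes.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Visitor:
    name: str
    visit_count: int
    last_seen: datetime


def sort_queue(visitors):
    """Highest visit count first; the most recent visit breaks ties."""
    return sorted(
        visitors,
        key=lambda v: (v.visit_count, v.last_seen),
        reverse=True,
    )


# Invented queue entries.
queue = sort_queue([
    Visitor("first-timer today", 1, datetime(2024, 5, 3)),
    Visitor("returner last week", 6, datetime(2024, 4, 26)),
    Visitor("returner today", 6, datetime(2024, 5, 3)),
])
ordered_names = [v.name for v in queue]
```

A recency-only sort would put the first-timer level with "returner today". This version ranks both returners ahead of the first-timer.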

2. Reach out when the deterministic identification fires, not on form fill

Visitor identification surfaces a returning buyer well before they self-identify with a form. Match rate is 30-40%+ on US B2B traffic, which means a meaningful share of return visitors become contactable before they hit a form. If you wait for the form fill, you waited out most of the research window. See what to do when someone visits your pricing page for the specific workflow.

3. Match outreach content to visit position

The buyer’s research question shifts as visit count climbs. A buyer on visit 2 wants help understanding the category. A buyer on visit 5 wants help understanding their plan tier. A buyer on visit 9 wants help getting internal approval. Outbound that does not match the visit position lands flat. The midbound playbook covers this in more depth.

How to instrument this on your own site

If you want to read the curve on your own buyers, four inputs.

| Input | Why it matters |
|---|---|
| Person-level identification | Stitches sessions to humans, not cookies |
| Cross-device persistence | Catches the laptop-to-phone-to-desktop pattern |
| Visit-numbered events in your CRM | Allows downstream cohort analysis |
| Closed-won flag tied to identity | Lets you build the cohort retroactively |

Hold each field constant for at least 90 days before reading patterns. The signal is real but the volume per cohort takes a few months to accumulate on most B2B sites, especially in the long tail of high-visit-count buyers.
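For the visit-numbered events input, a rough sketch is to walk each person's visits in time order and stamp a running visit number on each one before it is written to the CRM. The tuple layout and IDs below are assumptions for illustration. ISO-formatted date strings are used because they sort chronologically.

```python
from collections import defaultdict


def number_visits(events):
    """events: iterable of (person_id, iso_date) pairs, in any order.

    Returns (person_id, iso_date, visit_number) tuples in chronological order.
    """
    counters = defaultdict(int)
    numbered = []
    for person_id, iso_date in sorted(events, key=lambda e: e[1]):
        counters[person_id] += 1
        numbered.append((person_id, iso_date, counters[person_id]))
    return numbered


# Invented raw events, deliberately out of order.
raw_events = [
    ("person-1", "2024-04-15"),
    ("person-2", "2024-02-20"),
    ("person-1", "2024-01-10"),
    ("person-1", "2024-02-02"),
]
numbered_events = number_visits(raw_events)
```

Once every event carries its visit number, cohort questions such as "which visit did closed-won buyers convert on" become simple group-bys.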

Limitations to be honest about

Three.

- Closed-won bias. You can only read the visit count for buyers who eventually bought. Lost-deal buyers probably visit more, perhaps significantly more, but you cannot prove it inside a closed-won cohort alone. Building a “visited but did not buy” comparison cohort is harder and almost always undercounts.

- Cross-device stitching is imperfect. A buyer who switches from laptop to phone to work desktop may show up as 2 or 3 buyers rather than 1 even with deterministic stitching. The undercount is real; the direction (a buyer’s actual visit count is higher than what you measure) is consistent.

- Pixel coverage window. You can only count visits that happened after the pixel was deployed. Early-market buyers in the cohort may have visited before you could see them.

What changes if you start reading the curve

A team that starts reading the return-visit curve usually changes four things in the first quarter:

- Retargeting windows extend.

- Paid-search budget gets reallocated toward middle-of-funnel content.

- SDR queues get re-sorted by visit count, not just recency.

- Lead scoring weighs return visits higher than first visits.

Each of those moves is small in isolation. Together they shift the team’s center of gravity from “convert today’s visitor” to “stay in front of a buyer over a research window.” That shift is the structural advantage of reading the curve honestly. The teams still optimizing for first-visit conversion are competing for the smallest, fastest-decaying slice of the pipeline.

Leadpipe identifies 30-40%+ of your US B2B visitors with full contact data on the Pro plan at $147/mo. No credit card to start the 500-lead trial. Start identifying visitors →