Is lead scoring dead? That is the question I keep getting. My short answer, after six years of running it, two years of trying to fix it, and one year of replacing it with something different: yes, the traditional lead scoring model is dead. Something better took its place.

I am George, founder of Leadpipe. This post is about why we stopped scoring leads as entities and started scoring moments as events. The distinction sounds semantic. It is not. Once you make the switch, you cannot go back.

The short version

A lead score is an average over a person’s entire relationship history. A moment score is a snapshot of this specific visit, this specific week, this specific research pattern. Averages hide the thing you actually want to act on: the live buying window.

Every lead scoring system I have ever used eventually ran into the same wall: the score does not change fast enough to tell you when to reach out. Scoring the moment instead of the lead fixes that.

What is wrong with traditional lead scoring

Four problems, built into the model.

1. The score averages across time

Traditional scoring is cumulative. Every action adds points. Page views, webinar attendance, email opens, form fills. The result is a score that reflects the person’s lifetime engagement, not their current buying state.

A lead who was hot six months ago, cold since, and came back to your pricing page yesterday looks high-scoring because of the residual points from six months ago. A brand-new visitor who just hit pricing for the first time looks low-scoring because they have no history.

Both of those are wrong. The returning lead is lukewarm. The brand-new visitor is hot.
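Here is a toy comparison that makes the averaging problem concrete. It is a minimal sketch: a lifetime cumulative score next to a score that only counts the last 7 days. The point values reuse the examples in this post (pricing 10, webinar 15, email open 2, blog 3). The dates and the 7-day window are assumptions for illustration.

```python
from datetime import date, timedelta

# Point values taken from the examples in this post; everything else is illustrative.
POINTS = {"pricing": 10, "webinar": 15, "email_open": 2, "blog": 3}

def cumulative_score(events):
    """Lifetime score: every event ever recorded adds its points."""
    return sum(POINTS[kind] for _, kind in events)

def recent_score(events, today, window_days=7):
    """Only events inside the trailing window count."""
    cutoff = today - timedelta(days=window_days)
    return sum(POINTS[kind] for day, kind in events if day > cutoff)

today = date(2024, 6, 30)

# Hot six months ago, cold since, one pricing visit yesterday.
returning_lead = [
    (date(2023, 12, 28), "webinar"),
    (date(2023, 12, 29), "pricing"),
    (date(2024, 1, 2), "webinar"),
    (date(2024, 1, 3), "email_open"),
    (date(2024, 6, 29), "pricing"),
]
# Brand-new visitor: a blog post, then pricing, both this week.
new_visitor = [
    (date(2024, 6, 28), "blog"),
    (date(2024, 6, 29), "pricing"),
]

print(cumulative_score(returning_lead), cumulative_score(new_visitor))  # 52 13
print(recent_score(returning_lead, today), recent_score(new_visitor, today))  # 10 13
```

The cumulative view ranks the returning lead four times higher. The windowed view flips the order, which matches the live state.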

2. Negative signals are underweighted

Most scoring systems are weak at decay and negative signals. You can set a decay rate, but in practice it is rarely tuned well. People who disengage for 120 days still show up with elevated scores. The score does not know the window has closed.

3. The weights are guesses

When was the last time anyone at your company ran a real statistical calibration on the lead score weights? “Visited pricing = 10 points, opened email = 2 points, attended webinar = 15 points.” Why 10? Why 2? Why 15? In most marketing automation platforms, the weights were set by the marketer who built the system and never revisited.
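Here is one way to put the weights on a measured footing, sketched with invented toy records. For each signal, compute its lift: the conversion rate among leads that showed the signal, divided by the overall conversion rate. Lift is a crude stand-in for a proper model such as logistic regression. The record format and signal names are hypothetical, and the point is the procedure, not these numbers.

```python
# Toy history (invented): (signals observed on the lead, whether it became an opportunity).
history = [
    ({"pricing", "email_open"}, True),
    ({"pricing"}, True),
    ({"webinar", "email_open"}, False),
    ({"email_open"}, False),
    ({"webinar"}, True),
    ({"email_open", "blog"}, False),
    ({"pricing", "webinar"}, True),
    ({"blog"}, False),
]

def signal_lift(history):
    """Return {signal: P(convert | signal) / P(convert)} for every signal seen."""
    base_rate = sum(converted for _, converted in history) / len(history)
    signals = set().union(*(s for s, _ in history))
    lift = {}
    for sig in sorted(signals):
        outcomes = [converted for s, converted in history if sig in s]
        lift[sig] = (sum(outcomes) / len(outcomes)) / base_rate
    return lift

for sig, value in signal_lift(history).items():
    print(f"{sig:>10}: {value:.2f}")
```

With real data, a lift far from what the hand-set points imply is a sign that the weights have drifted.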

The glossary page on lead scoring is clear-eyed about this. It is a heuristic, not a measurement. Heuristics drift.

4. The score is a person, not a context

This is the deepest problem. The score is attached to a record. But buying is not a property of a record; it is a state of context. The same person at the same company can be out of market Monday and in market Thursday. The record did not change. The context did.

The lead score has no way to represent context. So it collapses the state into an average and ships it to your sales team as a qualification.

What a moment is

A moment is a finite, observable window where a specific person, at a specific company, is doing a specific thing that indicates buying intent.

Examples of moments, in order of increasing signal strength.

- Research moment. Person researches the category across the web (picked up by Orbit person-level intent).

- Discovery moment. Person arrives on your site for the first time, from an organic or referral source.

- Evaluation moment. Person views a VS post, a competitor comparison, or a product deep-dive.

- Consideration moment. Person visits the pricing page or the enterprise call to action.

- Commitment moment. Person starts a trial, books a demo, or sends an inbound email.
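The five moment types can be sketched as an ordered enum, with a hypothetical mapping from observed events to moments. The event names (`orbit_topic_match`, `vs_post`, and so on) are assumptions for illustration. They are not Leadpipe or Orbit identifiers.

```python
from enum import IntEnum

class Moment(IntEnum):
    """Moment types, ordered by increasing signal strength."""
    RESEARCH = 1
    DISCOVERY = 2
    EVALUATION = 3
    CONSIDERATION = 4
    COMMITMENT = 5

# Hypothetical event names mapped to the moment each one indicates.
EVENT_TO_MOMENT = {
    "orbit_topic_match": Moment.RESEARCH,
    "first_visit": Moment.DISCOVERY,
    "vs_post": Moment.EVALUATION,
    "competitor_comparison": Moment.EVALUATION,
    "product_deep_dive": Moment.EVALUATION,
    "pricing_page": Moment.CONSIDERATION,
    "enterprise_cta": Moment.CONSIDERATION,
    "trial_start": Moment.COMMITMENT,
    "demo_booked": Moment.COMMITMENT,
    "inbound_email": Moment.COMMITMENT,
}

def strongest_moment(events):
    """Return the strongest moment among a session's events, or None."""
    moments = [EVENT_TO_MOMENT[e] for e in events if e in EVENT_TO_MOMENT]
    return max(moments, default=None)

print(strongest_moment(["first_visit", "vs_post", "pricing_page"]).name)  # CONSIDERATION
```

Because `IntEnum` members compare as integers, `max` picks the strongest signal directly.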

Each moment has a score. The score reflects the strength of the signal, the recency of the signal, and the fit of the person. Moments expire. A pricing page visit from three weeks ago is not the same signal as one from three minutes ago, even from the same person.
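This sketch shows why a three-week-old pricing visit and a three-minute-old one are different signals. It uses exponential decay with a hypothetical 3-day half-life. The half-life, the 60-point base strength, and the exponential shape are all assumptions, since the post does not specify the exact decay function.

```python
import math
from datetime import datetime, timedelta

HALF_LIFE_HOURS = 72  # hypothetical: signal strength halves every 3 days

def decayed_strength(base, occurred_at, now):
    """Exponentially decay a moment's strength by its age in hours."""
    age_hours = (now - occurred_at).total_seconds() / 3600
    return base * math.pow(0.5, age_hours / HALF_LIFE_HOURS)

now = datetime(2024, 6, 30, 12, 0)
fresh = decayed_strength(60, now - timedelta(minutes=3), now)
stale = decayed_strength(60, now - timedelta(weeks=3), now)
print(round(fresh, 1), round(stale, 2))
```

Three weeks is seven half-lives here, so the same pricing visit keeps less than 1% of its original strength.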

This is what we mean by “scoring moments, not leads.”

How moment scoring works mechanically

Four inputs.

- Fit. Does the person/company match your ICP? Title, seniority, company size, industry, technographics. This is static, or near-static.

- Intent strength. How strong is the current behavior? Page type, dwell time, pattern, Orbit topic match.

- Recency. How long ago did this happen? Decays quickly. A moment from today is worth a lot. A moment from 30 days ago is a historical footnote.

- Continuity. How does this moment connect to prior moments? Third visit in a week scores higher than first visit in six months.
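Continuity is the input that traditional scoring models usually lack, so here is a minimal sketch of it. The sketch counts the person's prior visits in the trailing 7 days and converts the count to points, capped at 20 to match the 0-20 continuity range given later in this post. The 7-day window and the 8 points per visit are assumptions.

```python
from datetime import datetime, timedelta

def continuity_points(visit_times, now, window_days=7, per_visit=8, cap=20):
    """Each prior visit inside the window adds `per_visit` points, capped at `cap`.
    The current visit itself earns nothing here."""
    cutoff = now - timedelta(days=window_days)
    prior = [t for t in visit_times if cutoff <= t < now]
    return min(len(prior) * per_visit, cap)

now = datetime(2024, 6, 30, 9, 0)
third_visit_this_week = [now - timedelta(days=3), now - timedelta(days=1)]
first_in_six_months = [now - timedelta(days=180)]

print(continuity_points(third_visit_this_week, now))  # 16
print(continuity_points(first_in_six_months, now))    # 0
```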

We express a moment score as a number between 0 and 100, recalculated every session, decayed every hour. It is not a property of the person; it is a property of the session in the context of the person’s recent behavior.

A simple picture:

Moment score (0-100) over 30 days for one person:

Day 1: first visit, blog post ▮▮ 12

Day 4: returned, read VS post ▮▮▮▮▮ 28

Day 7: visited pricing, 2 min ▮▮▮▮▮▮▮▮▮▮▮ 64

Day 8: visited pricing again ▮▮▮▮▮▮▮▮▮▮▮▮▮▮▮ 88

Day 9: (no activity) ▮▮▮▮▮▮▮▮▮▮▮▮▮ 76

Day 14: (no activity) ▮▮▮▮ 21

Day 28: (no activity) ▮ 5

The score peaks at the behavior, decays with time, and revives when new behavior occurs. That is what your sales team needs to know. Not “this person is a 74/100.” But “right now, today, this person is a live 88.”

What changed when we switched

The headline change is that meetings-booked-per-alert went up meaningfully once the alert reflected a live moment instead of a cumulative score. The more important changes are qualitative.

Sales trust in the signal went up. When a Slack alert says “live 88,” the AE opens it. When the alert is a static MQL score, the AE quietly ignores it.

The SDR’s first message got better. Because the alert references what the person is doing right now, the email writes itself. “You looked at pricing twice this week” is a better opener than “Based on your engagement score.”

Marketing and sales stopped fighting over MQL quality. The fight was always downstream of the model. Once the score described a moment rather than a person, there was nothing to argue about. The moment either happened or it did not.

Pipeline coverage got more honest. Instead of hundreds of “MQLs” rotting in SDR queues, we had a much smaller weekly list of live moments worth acting on. Smaller list. Higher conversion. We saw the same pattern in our BDR postmortem.

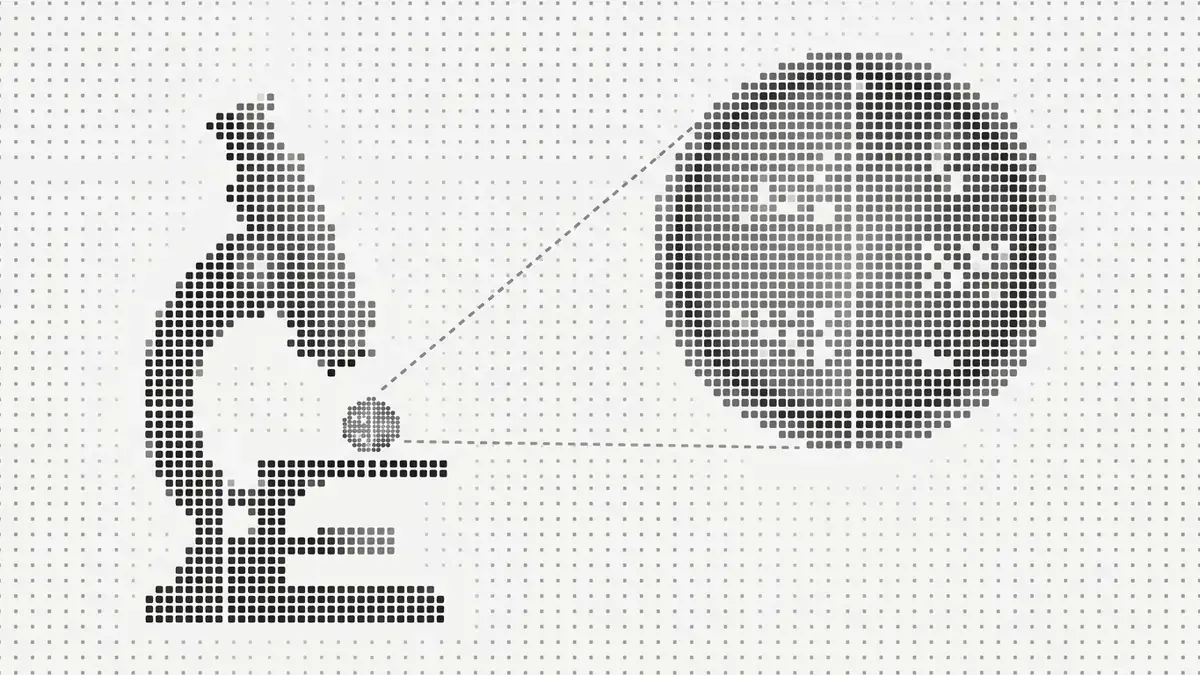

What you need to score moments

Three layers.

Layer 1: Identity

You cannot score a moment for an anonymous visitor because you cannot stitch the sessions together. Identification is the prerequisite. Once a visitor is identified by Leadpipe, every future session is attached to the same identity. Continuity is automatic.

Layer 2: Behavior

You need per-session signals richer than a page view. Time on page, scroll depth, exit point, sequence through the site. Most analytics tools log these. The trick is keying them to identity rather than to anonymous sessions.
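Here is a minimal sketch of keying per-session signals to identity rather than to anonymous sessions. The `resolved` map is a hypothetical stand-in for whatever identification layer links a session ID to a person. Once that link exists, a person's sessions can be grouped and ordered. The field names are assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Session:
    session_id: str
    started_at: str      # ISO-8601 timestamp; same-format strings sort chronologically
    pages: list
    dwell_seconds: int
    max_scroll_pct: int

def stitch(sessions, resolved):
    """Group sessions by resolved person; unresolved sessions are set aside."""
    by_person = defaultdict(list)
    anonymous = []
    for s in sessions:
        person = resolved.get(s.session_id)
        if person is None:
            anonymous.append(s)
        else:
            by_person[person].append(s)
    for history in by_person.values():
        history.sort(key=lambda s: s.started_at)
    return dict(by_person), anonymous

sessions = [
    Session("s2", "2024-06-27T10:00:00", ["/vs/competitor"], 240, 90),
    Session("s1", "2024-06-24T09:00:00", ["/blog/post"], 60, 40),
    Session("s3", "2024-06-28T15:00:00", ["/pricing"], 120, 75),
]
resolved = {"s1": "jane@acme.example", "s2": "jane@acme.example"}  # hypothetical
people, anonymous = stitch(sessions, resolved)
print([s.session_id for s in people["jane@acme.example"]])  # ['s1', 's2']
print([s.session_id for s in anonymous])                     # ['s3']
```

The anonymous `s3` pricing visit cannot contribute to anyone's moment score until it is identified, which is why identity comes first.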

Layer 3: Off-site intent

On-site behavior is only half the picture. The other half is what the person was doing before they got to your site. Orbit adds person-level intent from research across 5M sites. When a person is in an Orbit audience AND visits your pricing page, the moment score doubles.

That combination, on-site behavior + off-site intent, keyed to a persistent identity, is the scorable unit. This is the shape of modern buyer intent.

How we set moment scores in practice

The weights are not secrets. Here is the rough shape of our model, in plain English.

- Fit (0-20 points). Title + seniority + company size + industry match to ICP. Contributes but does not dominate. A CFO at a target account who never comes back is not a hot moment.

- Base behavior (0-30 points). Page type and dwell time. Pricing = +15, product = +10, blog = +3, etc.

- Continuity (0-20 points). Second or third visit in a week adds meaningfully.

- Off-site intent (0-20 points). Orbit research on relevant topics in the last 14 days.

- Negative signals (0 to -30 points). Competitor domain, existing customer, student email, bounce indicators.

Sum, clamp to 0-100, expose to Slack and CRM. Decay over 14 days.
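Here is that recipe as a minimal sketch. Several details come from the list above: the component ranges (fit 0-20, base behavior 0-30, continuity 0-20, off-site intent 0-20, negatives 0 to -30), the page values for pricing, product, and blog, and the clamp to 0-100. Everything else is an assumption. That includes the dwell-time bonus, the size of each individual negative signal, and reading "decay over 14 days" as a linear fade to zero across 14 days.

```python
from dataclasses import dataclass, field

PAGE_POINTS = {"pricing": 15, "product": 10, "blog": 3}  # values from the post
NEGATIVE_POINTS = {  # hypothetical sizes; the post only gives the 0 to -30 range
    "competitor_domain": -30,
    "existing_customer": -30,
    "student_email": -20,
    "bounce": -10,
}
DECAY_DAYS = 14

def clamp(x, lo, hi):
    return max(lo, min(hi, x))

@dataclass
class MomentInputs:
    fit: int                # 0-20, ICP match
    pages: list             # page types viewed this session
    dwell_seconds: int
    continuity: int         # 0-20
    offsite_intent: int     # 0-20, recent off-site research on relevant topics
    negatives: list = field(default_factory=list)

def base_behavior(pages, dwell_seconds):
    """Page-type points plus a hypothetical dwell bonus (+1 per 30 s), capped at 30."""
    points = sum(PAGE_POINTS.get(p, 0) for p in pages) + dwell_seconds // 30
    return clamp(points, 0, 30)

def moment_score(m):
    """Sum the clamped components, then clamp the total to 0-100."""
    raw = (
        clamp(m.fit, 0, 20)
        + base_behavior(m.pages, m.dwell_seconds)
        + clamp(m.continuity, 0, 20)
        + clamp(m.offsite_intent, 0, 20)
        + clamp(sum(NEGATIVE_POINTS.get(n, 0) for n in m.negatives), -30, 0)
    )
    return clamp(raw, 0, 100)

def decayed(score, days_since):
    """Linear fade to zero over DECAY_DAYS (an assumed curve)."""
    return score * max(0.0, 1 - days_since / DECAY_DAYS)

m = MomentInputs(fit=16, pages=["product", "pricing"], dwell_seconds=120,
                 continuity=16, offsite_intent=12)
score = moment_score(m)
print(score)               # 73
print(decayed(score, 7))   # 36.5
```

Each component is clamped before summing, so no single input can dominate, which is the point the fit bullet makes about a CFO who never comes back.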

Your model will differ. The point is not the weights. The point is that the unit of scoring is the current moment, not the cumulative record.

Where traditional lead scoring still works

I do not want to overstate this. Traditional lead scoring has use cases.

- Marketing reporting at a coarse level. “How many leads did we generate this quarter?” The lead score is a passable filter.

- Lifecycle segmentation in email. Long nurture sequences that gate content on engagement tiers. The score is a gate, not a trigger.

- ABM account-level aggregation. Rolling individual scores up to an account-level score is a reasonable dashboard metric.

These are valid. But none of them is the sales timing decision. For the sales timing decision, you want the moment.

What it means for your marketing ops

Three shifts.

1. The MQL stops being the hand-off. The hand-off becomes “a moment crossed threshold X in the last Y hours.” Your MQL can stay, but as a reporting roll-up, not a trigger.

2. Slack becomes the interface, not the CRM. Moments are ephemeral. They belong in a feed, not a queue. Slack alerts are how AEs see them.

3. The scoring model lives in the identity layer, not in marketing automation. Marketing automation was built around the record. The identity layer is built around the person-session pair. Move the scoring to where the real-time signal lives.
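The first two shifts can be sketched together: a hand-off rule ("a moment crossed threshold X in the last Y hours") that, when it fires, posts an alert to Slack. Slack incoming webhooks accept a JSON body with a `text` field, posted to the webhook URL. In this sketch, `threshold` and `window_hours` stand in for X and Y, and their defaults (70 and 48) are placeholders, not recommendations. The message wording and the `SLACK_WEBHOOK_URL` environment variable name are also assumptions.

```python
import json
import os
import urllib.request
from datetime import datetime, timedelta

def should_hand_off(moments, now, threshold=70, window_hours=48):
    """True if any (timestamp, score) moment in the last `window_hours`
    reached `threshold`. Older moments are ignored, however high."""
    cutoff = now - timedelta(hours=window_hours)
    return any(score >= threshold for ts, score in moments if ts >= cutoff)

def build_alert(name, company, score, activity):
    """Slack incoming-webhook payload: a JSON object with a `text` field."""
    return {"text": f"Live {score}: {name} ({company}) {activity}"}

def post_to_slack(webhook_url, payload):
    """POST the payload as JSON to a Slack incoming webhook URL."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status

now = datetime(2024, 6, 30, 12, 0)
moments = [(now - timedelta(days=20), 91), (now - timedelta(hours=5), 88)]
if should_hand_off(moments, now):
    payload = build_alert("Jane Doe", "Acme", 88, "viewed pricing twice this week")
    print(payload["text"])
    webhook = os.environ.get("SLACK_WEBHOOK_URL")  # hypothetical variable name
    if webhook:
        post_to_slack(webhook, payload)
```

The stale 91 from 20 days ago never triggers anything on its own. Only the live 88 does.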

What we would do differently

- Start earlier. We ran traditional lead scoring for too long after the cracks showed. We should have started the moment-based pilot a year sooner.

- Educate sales before deploying. The moment-based signal is different from what AEs are used to. Invest in training in the first week, not the fourth.

- Publish decay curves. Marketing ops cared about decay; sales did not know it existed. Show decay in the Slack alert itself.

- Tie moment scores to CRM fields. We initially kept moments in Slack only. Piping the maximum moment score from the last 14 days into a field on the CRM lead record was worth it.
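Here is a minimal sketch of a CRM field that holds the maximum moment score from the trailing 14 days, computed from (timestamp, score) pairs. The field name `max_moment_14d` is hypothetical. The actual write to the CRM is left out because it depends on your CRM's API.

```python
from datetime import datetime, timedelta

def max_moment_last_14_days(moments, now):
    """Highest moment score in the trailing 14 days, or 0 if there is none."""
    cutoff = now - timedelta(days=14)
    return max((score for ts, score in moments if ts >= cutoff), default=0)

now = datetime(2024, 6, 30)
moments = [
    (now - timedelta(days=30), 92),  # outside the window, ignored
    (now - timedelta(days=10), 64),
    (now - timedelta(days=2), 41),
]
crm_update = {"max_moment_14d": max_moment_last_14_days(moments, now)}
print(crm_update)  # {'max_moment_14d': 64}
```

A rolling maximum gives CRM views and reports a stable number, while Slack keeps the live, decaying one.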

The bigger picture

Fit is not intent. Fit plus intent is a prospect. Fit plus intent plus a live moment is a meeting. That is the ladder. Traditional lead scoring flattens the third step. Moment scoring preserves it.

If the rest of your stack has caught up to live signals (intent data, identified visitors, real-time webhooks), the last thing holding you back is a scoring model that treats people like static records. Stop doing that. Score the moment.

Leadpipe identifies 30-40%+ of your US B2B visitors with full contact data on the Pro plan at $147/mo. No credit card to start the 500-lead trial.